Chad Rubin

May 2, 2026 · 12 min read

Most "rule-based vs AI repricing" debates online are pitched as a binary. One side is faster, smarter, cheaper. The other is a relic. That framing is wrong, and it costs operators money.

Both approaches work. Both fail. They fail in different conditions, on different SKUs, and at different points in an account's growth curve. The sellers I work with who treat this as a religious war tend to leave margin on the table. The ones who treat it as a tooling question (right tool for the right SKU) compound margin instead.

This is the operator's breakdown for 2026. What rule-based actually does well, what it cannot do, where AI repricing earns its higher SaaS bill, and where it quietly burns cash unsupervised.

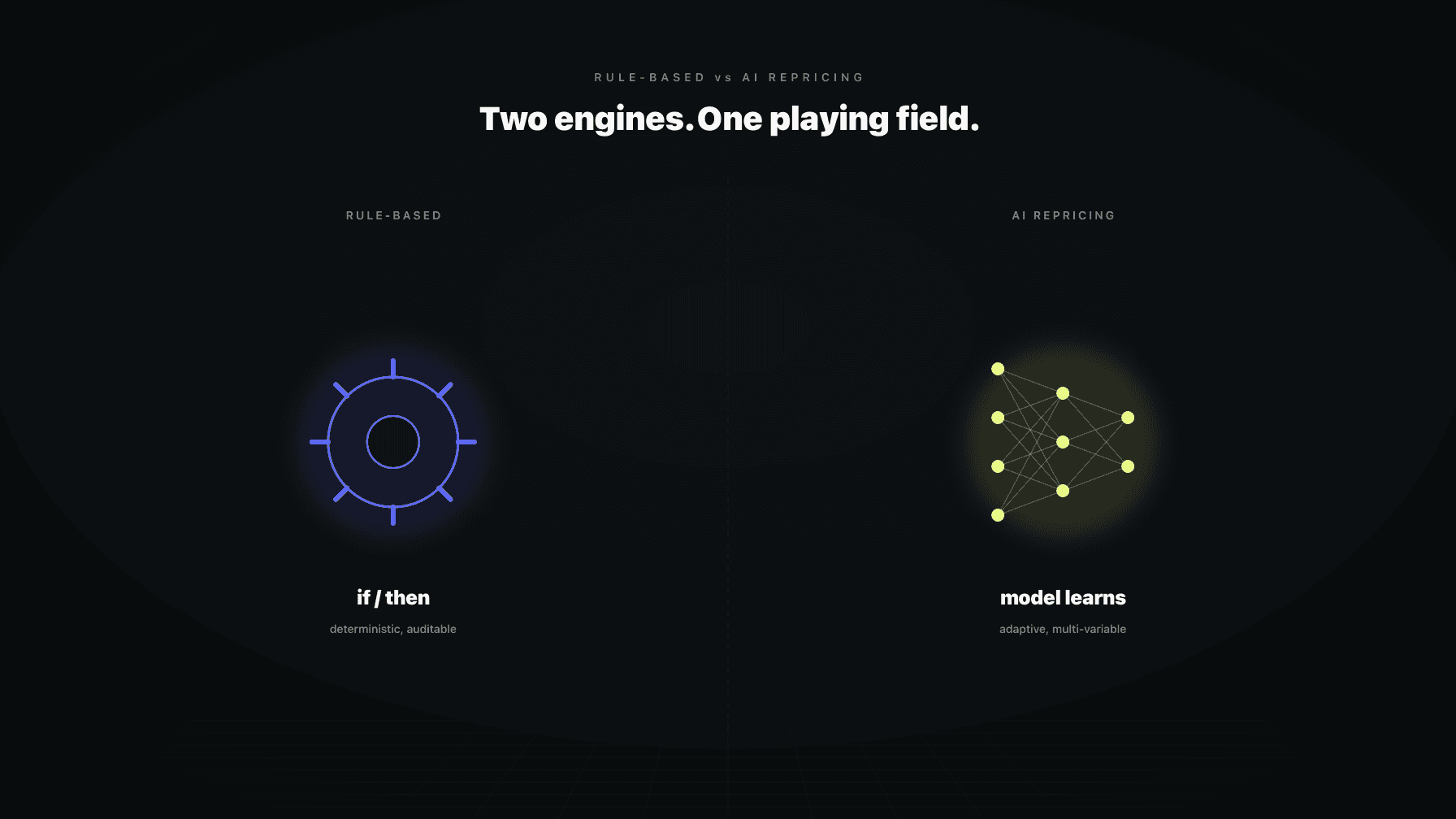

"Rule-based" and "AI" are not opposites. Rules are still inside every AI repricer worth using, and most modern rule-based tools have a statistical model under the hood. The honest comparison is about who makes the pricing decision: a human-authored if/then tree, or a model trained on your data.

## Key takeaways >- Rule-based repricing wins in stable categories with simple competitive landscapes and predictable patterns. It is auditable, predictable, and cheap to operate.- AI repricing wins when there are too many variables to encode by hand: seasonality, Buy Box rotation logic, competitor pattern shifts, large catalogs.- Rule-based breaks when the rule writer cannot keep up with reality. Categories shift, competitors change behavior, and a frozen rule set quietly leaks margin.- AI repricing breaks on cold-start SKUs, on edge cases the model has never seen, and any time your COGS or target inputs are wrong. Garbage in, expensive garbage out.- The mature setup is hybrid. Rules act as guardrails (floor, ceiling, MAP, brand-specific blocks). AI handles the decision inside those guardrails. An audit log sits on top so a human can read why each price moved.- The cost discussion is rarely honest. Rule-based is cheap on the SaaS line and expensive in lost margin. AI repricing is the inverse: higher SaaS cost, tighter margin, fewer "why did we lose the Buy Box for three days" incidents.- Pick by category and account size, not by ideology. Small catalogs in stable niches do fine on rules. Large catalogs in volatile niches need a model.

Rule-based repricing is deterministic. You write if/then conditions and the tool executes them on a schedule. The simplest version: "match the lowest FBA competitor minus one cent, never go below floor, never above ceiling." More elaborate versions layer in conditions: who has the Buy Box, FBA or FBM, known resellers to skip, current stock on hand, active sale or coupon.

The strength is predictability. You can read the rule and audit the decision. If something looks wrong, you trace the conditions and find the branch that fired. There is no hidden state.

The weakness is also predictability. The rule does what the rule says. If the world changes and the rule writer does not, the rule keeps doing the wrong thing efficiently. A category with 4 reseller competitors last quarter and 11 this quarter is not the same category, and the rule tuned to the first version is now mispriced against the second.

Weekly insights on AI, Amazon operations, and profit optimization.

Founder & CEO, Profasee

Ran a 7-figure Amazon brand for a decade. Founded Skubana (acquired). Co-founded Prosper Show. 15+ years on Amazon.

Join the brands that replaced agencies and tools with AI employees.

AI repricing replaces the if/then with a model that learns from data. Inputs typically include historical sales velocity, conversion rate at different prices, competitor price moves, Buy Box wins and losses, time of day patterns, inventory position, traffic, and any external signal the vendor plumbs in (reviews, ratings, search rank).

The model estimates two things. First, the relationship between price and demand for this SKU. Second, the relationship between price and Buy Box probability against the current competitive set. It picks a price that maximizes whatever you told it to optimize.

The strength is multi-variable optimization. A human cannot hold 30 inputs in their head and recompute the right price every 15 minutes across 4,000 SKUs. A model can. With clean data, it finds price points a rule writer would never test.

The weakness is opacity. The model only knows what it has been shown. It can be confidently wrong on a SKU it has never priced, and it chases bad signals if upstream data is dirty.

Rule-based wins anywhere the world is stable and the rule writer is informed.

Stable categories are the obvious case. Slow-moving consumables, replacement parts, accessory SKUs, items where the competitive set has not changed materially in 18 months. The price-demand curve is roughly flat in the relevant band. You set a floor, a ceiling, and a Buy Box matching rule, and you get out of the way.

Simple competitive landscapes also favor rules. If you have one or two consistent competitors and you know their behavior, a hand-written rule holds the Buy Box more cheaply than a model. Paying a higher SaaS fee for a model to confirm what you already encoded is a waste.

Regulated price floors are a third strong case. MAP-protected products, brand-controlled SKUs, anything where the legal floor is more important than the optimal price. Rules express a hard floor cleanly and are easier to defend in a brand call.

Finally, rules win when one person can hold the whole catalog in their head. If you have 40 SKUs and you actually know each one, a rule set you wrote yourself will outperform a model trained on thin data.

Rule-based breaks the moment reality outpaces the rule writer.

Volatile categories are the first failure mode. Toys, electronics, apparel, anything seasonal, anything tied to a tentpole event. Most operators do not revisit their rules quarterly. They write a rule once, paste it across SKUs, and forget. By month nine the rules are quietly losing money.

Multi-variable signals are the second failure mode. If the right price depends on inventory cover, competitor density, day of week, recent review volume, and current ad spend, no rule tree captures it cleanly. You end up with a tangled mess of conditions nobody on the team can debug.

Seasonality is a special case. A simple "weekend bump" schedule works for some categories. For others, peak demand starts on a Wednesday three weeks before a holiday and trails off non-linearly afterward. Encoding that by hand is fragile.

Competitor pattern shifts kill rule-based pricing quietly. If a major competitor switches from FBM to FBA, or starts running coupons on a different cadence, your "match the lowest FBA competitor" rule is now matching a different curve. You notice when Buy Box share drops and units slow. By then you have leaked weeks of margin.

AI repricing wins when the problem has more variables than a human can hold and more SKUs than a team can babysit.

Multi-variable optimization is the headline use case. A model that weighs price, conversion, Buy Box probability, inventory position, and competitor behavior together finds prices that a rule writer would not. This is most visible on mid-velocity SKUs, where a small price change has a large unit impact.

Seasonality detection is the second clear win. A model trained on multiple years of data picks up patterns the rule writer would not notice: a slow ramp two weeks before category-wide demand, an elasticity shift on the back side of a holiday, a regional pattern that does not match the national one.

Latent Buy Box variables are a third advantage. Amazon's Buy Box logic is a multi-input function that includes seller metrics, fulfillment speed, stock depth, and other variables Amazon does not document. A model trained on Buy Box wins and losses can learn the function as it operates today.

Large catalogs are the practical win. A team that can hand-tune 50 SKUs cannot hand-tune 5,000. AI repricing scales because the same model handles every SKU. Operators with broad assortments, private label plus reseller mix, or rapid SKU expansion need this.

AI repricing has real failure modes, and vendors do not love talking about them.

Cold start is the obvious one. A brand-new SKU has no sales history, no Buy Box history, no conversion data. The model has nothing to optimize against. Run a rule on it for the first month or two, or accept that the model is guessing inside a wide band.

Edge cases are the second. SKUs with extreme seasonality, regulated categories, hardware launches or brand events. The model trained on the broad catalog has not seen these and may chase a noisy signal. A typical 7-figure brand has a long tail of SKUs that look weird to a model, and that tail is where AI repricers most often misbehave.

Bad inputs are the most common failure, and the most under-discussed. If your COGS is wrong by 12 percent, the model optimizes toward what it thinks is profit and what is actually loss. AI repricers are very good at executing whatever you tell them. That is also the problem.

Finally, AI repricers can be hard to audit. If your repricer cannot show you the inputs that drove a decision, you cannot defend it to a brand owner, and you cannot tune it.

The setup that wins in practice on 7-figure and 8-figure accounts is not pure rule-based or pure AI. It is a hybrid.

Rules become guardrails. Floor, ceiling, MAP enforcement, brand-specific blocks, "do not match this seller," "do not move price during a flash sale window," "do not exceed plus or minus 8 percent in 24 hours." Deterministic, auditable, easy to defend in a brand conversation.

AI becomes the decision engine inside the guardrails. The model picks the price, but only inside the band the rules allow. Rules cap the downside. The model captures the upside.

An audit log sits on top of both. Every price change is logged with the inputs that drove it: which rule fired, what the model proposed, why the final price landed where it did. A pricing analyst can read the log and either trust the system or override it. This is the boring infrastructure that makes the difference between an account that uses AI repricing well and one that "tried AI but it kept doing weird stuff."

The honest cost comparison is rarely shown in vendor decks.

Rule-based has a low SaaS sticker. On the surface, the cheap option. The cost that goes unmeasured is the margin leak. A rule that is even slightly wrong, run at scale across thousands of SKUs for months, gives back more profit than the SaaS savings ever recovered. It does not show up on the invoice. It shows up as flat margin in a category that should have grown.

AI repricing has a higher SaaS sticker. The trade is tighter margin, fewer Buy Box incidents, less manual rule maintenance. Think in total cost: SaaS bill plus headcount on rule tuning plus margin gap. On large catalogs, AI repricing usually comes out cheaper on the total line.

Cheap rule-based you never tune is not cheap. Expensive AI you never feed clean inputs to is wasteful. Model total cost across both options for 12 months. The conversation gets less ideological once that math is on the table.

A practical rubric:

Under roughly 200 SKUs in stable categories with a small competitive set: run rule-based. Tune it quarterly. You do not need a model. You need discipline.

200 to 2,000 SKUs across mixed categories: run hybrid. AI on the volatile or mid-velocity SKUs, rules on the slow-movers and the brand-protected items. Set floor and ceiling rules across everything as a safety net.

Over 2,000 SKUs, especially across categories with seasonality or churn: run AI repricing as the default with rule-based guardrails. Hand-tuning at this scale is impossible.

Account stage matters too. A new account has thin data, and AI repricing on cold-start SKUs is mostly noise. Run rules until you have 60 to 90 days of history, then switch SKUs that have earned the data over to a model.

One question separates the serious vendors from the hype merchants. Ask: "show me the audit log for a single SKU's price changes over the last 14 days, with the inputs that drove each decision."

If the vendor cannot show you that, the tool is a black box and you should not buy it. You will be unable to defend a single price change to a brand owner or your own team, and you will have no way to debug a margin leak.

If the vendor can show you that, look at the inputs. Is COGS in there. Is inventory cover in there. Is Buy Box state in there. Is competitor density in there. Are the inputs current, or stale by a day. Quality of inputs determines quality of decision. Model architecture matters less than data plumbing.

One more question: "what does your tool do when my COGS is wrong." A serious vendor has an answer. A hype vendor will tell you their model is so smart it will figure it out. The first answer is true. The second is how operators lose money confidently.

Profasee runs the hybrid pattern as the default. Oracle, our pricing AI employee, makes price decisions using a model trained on your account's data plus broader market signal. Rules sit around it as guardrails: floor, ceiling, MAP, brand-specific blocks, and any constraint your team encodes. Every price change is logged with the inputs that drove it.

The piece most repricers miss is coordination. Pricing decisions affect ad performance and inventory burn. Oracle does not move alone. Marko sees the price changes and adjusts bids in the same loop, so a price drop does not silently widen ACoS. Bruno sees the same changes and updates demand forecasts and reorder timing, so a price-driven velocity bump does not turn into a stockout. The three agents share state through the same data layer.

The question to ask is not "is AI repricing better than rules." It is "is my pricing engine coordinated with my ads engine and my inventory engine, with rules as guardrails and a clean audit log on top." If the answer is no, the upgrade is the system, not the algorithm.

Not universally. AI repricing wins on large catalogs, volatile categories, and cases where the right price depends on more variables than a human can encode. Rule-based wins on stable categories, small catalogs, MAP-protected SKUs, and cases where auditability matters more than optimization. Most mature accounts run both: rules as guardrails and AI as the decision engine inside those guardrails. Picking one on principle leaves money on the table either way. Pick by category, account size, and data quality.

In most cases yes, at least to start. If you have under roughly 200 SKUs in a stable category, a well-written rule set will outperform a thinly-trained model. AI repricers need volume to find signal. A small catalog without much sales history does not give the model enough to learn from, and the result is confident-looking decisions on weak data. Run rules, tune quarterly, and migrate SKUs to AI repricing once you have 60 to 90 days of clean data plus the volume to make the upgrade worth the SaaS bill.

Yes, and on mature accounts this is the default setup. The pattern is rules as guardrails, AI as decisions, audit log on top. Rules express the hard constraints: floor, ceiling, MAP, do-not-match sellers, blackout windows. AI picks the price inside the band the rules allow. The audit log shows which rule fired and what the model proposed, so a human can read every decision after the fact. This hybrid is harder to set up than either pure approach, but it is meaningfully better in production. Most serious repricing tools support some version of it.

A trained model treats the Buy Box as a probability function rather than a fixed comparison. Inputs typically include price relative to competitors, fulfillment method, seller metrics, stock depth, and recent Buy Box history on that SKU. The model estimates the probability of winning the Buy Box at different prices and picks one that balances win-rate with margin. Rule-based tools match competitors but cannot model probability. The practical difference is that AI repricing tends to hold Buy Box at higher prices, because it knows when matching is unnecessary. The catch: bad data in, bad probability out.

Dynamic pricing is the broad category: any system that changes price in response to conditions, including hand-written rules on a schedule. AI repricing is a subset where the decision is made by a model trained on data. All AI repricing is dynamic pricing. Not all dynamic pricing is AI. The distinction matters because vendors use the terms loosely. A tool that calls itself "dynamic pricing" might be a rule engine with a calendar, and one that calls itself "AI" might be a rule engine with a regression bolted on. Ask for the audit log to find out which it is.

Three reasons. Auditability: a rule-based decision is easy to defend in a brand call. Stability: in a category that does not change much, a rule will quietly do the right thing without learning anything. Cold-start coverage: a brand-new SKU with no history gives a model nothing to work with, so a rule is the safer default for the first 60 to 90 days. Beyond that, the strongest case for keeping rules is using them as guardrails on top of an AI engine. The hybrid is the answer, not "AI replaces rules."

Watch four signals. Buy Box share trending down on SKUs that used to hold it. Margin compression on SKUs where COGS and competitive set have not changed. Velocity drops without a category-wide drop. Price changes that surprise your team when they look at the log. If any of these show up, the repricer is misfiring. The fastest diagnostic is the audit log: pick five SKUs that look wrong, read the input chain that led to the decision, and check whether the inputs were correct. Most "the AI is dumb" complaints turn out to be "the COGS file was stale."