Chad Rubin

April 14, 2026 · Updated May 11, 2026 · 10 min read


AI employees for Amazon sellers are named, role-based AI agents that own specific operational domains (PPC, pricing, inventory, listings) and coordinate decisions across functions automatically. Unlike standalone AI tools that optimize one thing in isolation, AI employees share data, react to each other's decisions, and operate as a team inside seller-defined guardrails.

If you are still running your Amazon business with four separate AI tools that do not talk to each other, you are solving a 2026 problem with 2024 architecture. The tools got smarter. The coordination did not.

I built Profasee because I lived this problem for a decade. Seven figures in revenue, five disconnected tools, three hours every morning wondering what they all did overnight. The tools were individually excellent. Together, they were chaos.

This article explains what AI employees actually are, how they differ from the tools you are already using, and why the shift from standalone automation to coordinated AI teams is happening now.


The term "AI employee" gets thrown around loosely. Sintra AI uses it. So does SendToTeam. Half the Amazon seller tools on the market have added "AI" to their name this year.

Here is the actual distinction that matters.

An AI tool does one thing well. It takes an input, runs a model, and produces an output. Helium 10's keyword suggestions. Seller Snap's game theory repricing. Perpetua's bid optimization. Each one is strong in its domain.

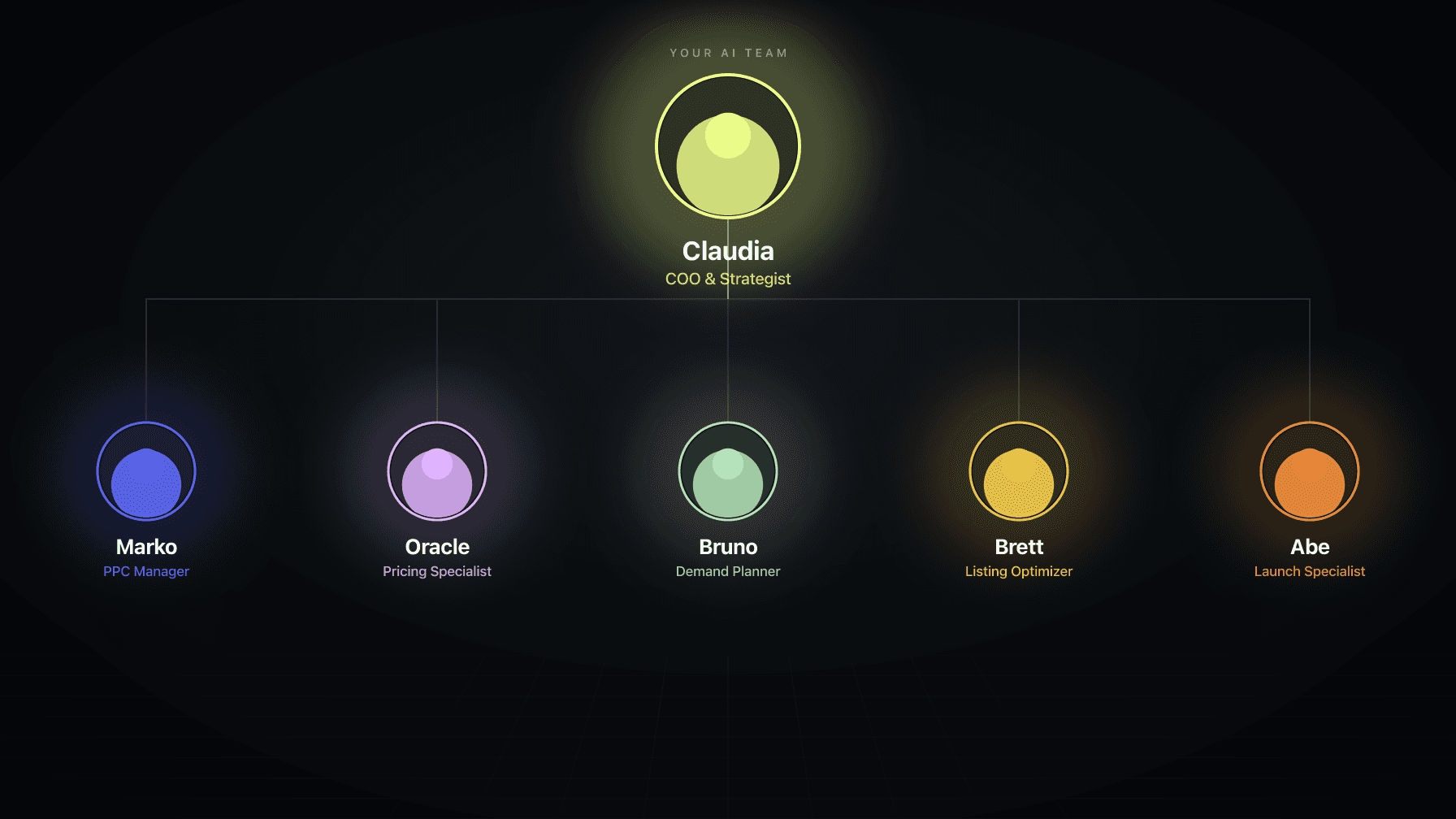

An AI employee does one thing well AND knows what every other employee is doing. When Bruno (the demand planner) detects that inventory on your top ASIN is dropping faster than expected, Marko (the PPC manager) automatically scales back ad spend on that product. Oracle (the pricing specialist) adjusts the price to slow velocity. Claudia (the COO) flags it in your morning Slack brief.


No human had to notice the signal. No human had to coordinate the response. The employees handled it because they share context.

It is the difference between hiring five freelancers who never talk to each other and hiring five employees who sit in the same room. The freelancers might each be brilliant. But if your pricing freelancer raises prices on Monday and your PPC freelancer keeps running the same bids on Tuesday, you burn money.

Not everything labeled "AI" is the same. There are three distinct levels, and understanding them saves you from paying for marketing claims.

Level 1 is rule-based automation. You set the rules, and the tool follows them: "If competitor price drops below $19.99, match it." "If ACoS exceeds 30%, lower bids by 10%."

This is what most repricers and basic PPC tools do. It is automation, not intelligence. The tool cannot reason about whether matching that competitor price makes sense given your current inventory level or your margin on that ASIN.
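To make the limitation concrete, here is a minimal sketch of a Level 1 rule engine using the two example rules above. The `ListingState` type and its field names are hypothetical; the $19.99, 30%, and 10% thresholds come from the text. Note that `units_in_stock` is sitting right there, and neither rule ever reads it.

```python
from dataclasses import dataclass, replace

@dataclass(frozen=True)
class ListingState:
    # Hypothetical snapshot of one ASIN; field names are illustrative only.
    our_price: float
    competitor_price: float
    acos: float           # advertising cost of sale as a fraction (0.30 = 30%)
    bid: float
    units_in_stock: int   # available to the rules, but neither rule reads it

def apply_level1_rules(state: ListingState) -> ListingState:
    """Apply the two fixed if-then rules from the text and return the new state."""
    price, bid = state.our_price, state.bid
    # Rule 1: if competitor price drops below $19.99, match it.
    if state.competitor_price < 19.99:
        price = state.competitor_price
    # Rule 2: if ACoS exceeds 30%, lower bids by 10%.
    if state.acos > 0.30:
        bid = round(bid * 0.90, 2)
    return replace(state, our_price=price, bid=bid)

# Only 12 units left, yet both rules fire exactly as written.
nearly_out = ListingState(our_price=21.99, competitor_price=18.49,
                          acos=0.34, bid=1.50, units_in_stock=12)
after = apply_level1_rules(nearly_out)
```

The rules do exactly what they were told: the price drops to $18.49 on an ASIN that is nearly out of stock, which only speeds up the stockout.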

Most Amazon seller tools calling themselves "AI-powered" are actually Level 1 with a better user interface.

Level 2 is single-domain machine learning. The tool learns from historical data and makes adjustments you did not explicitly program. Seller Snap's game theory identifies cooperative pricing strategies. Helium 10's Adtomic suggests bid changes based on conversion patterns. Perpetua's algorithm adjusts placements based on performance data.

This is genuinely useful. But it is still isolated. Each tool learns from its own data. Your PPC tool does not learn from your pricing data. Your repricer does not learn from your inventory data.

Level 2 tools create better decisions within their domain. They do not create better decisions across your business.

Level 3 is cross-functional coordination. The AI understands your business context across multiple functions and makes decisions that account for pricing, PPC, inventory, and listings simultaneously.

This is where the "employee" framing becomes accurate. An employee does not just optimize their department. They factor in what other departments are doing.

When you run five Level 2 tools simultaneously, you become the human middleware. You are the one who has to notice that your repricer changed prices, then go tell your PPC tool, then check if inventory is still healthy. You are the coordination layer.

Level 3 eliminates that layer.
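For contrast with the Level 1 rule, here is a minimal sketch of the same competitor-price trigger handled with shared context. It is an illustration under stated assumptions, not Profasee's logic: the `BusinessContext` fields, the 20% margin floor, and the 14-day cover threshold are all hypothetical defaults. It only covers pricing, inventory, and margin; in a coordinated system, the `hold_low_stock` branch is also where the PPC side would be told to scale back.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class BusinessContext:
    # Hypothetical cross-functional snapshot; thresholds are illustrative defaults.
    our_price: float
    competitor_price: float
    unit_cost: float              # COGS plus fees per unit
    days_of_cover: float          # units on hand / average daily units sold
    min_margin: float = 0.20      # seller-defined margin floor
    low_cover_days: float = 14.0  # below this, faster sales risk a stockout

def decide_price_response(ctx: BusinessContext) -> tuple[str, float]:
    """Return (decision, price) after checking price, inventory, and margin together."""
    if ctx.competitor_price >= ctx.our_price:
        return ("hold", ctx.our_price)
    # Inventory: a price cut speeds up sales we may not be able to restock in time.
    if ctx.days_of_cover < ctx.low_cover_days:
        return ("hold_low_stock", ctx.our_price)
    # Margin: the lowest price that still clears min_margin is cost / (1 - min_margin).
    floor = ctx.unit_cost / (1 - ctx.min_margin)
    if ctx.competitor_price < floor:
        return ("hold_margin_floor", ctx.our_price)
    return ("match", ctx.competitor_price)
```

The same competitor move produces three different answers depending on inventory and margin, which is the kind of judgment a single-domain tool cannot make on its own.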

Most sellers underestimate the coordination problem because the losses are invisible. Nobody sends you an invoice for "decisions your tools made in isolation."

Here is what it actually looks like.

Sarah runs a beauty brand doing $180K/month on Amazon. She stacked Perpetua for PPC, Seller Snap for repricing, and SoStocked for inventory. Each tool was well-configured and performing well on its own metrics.

In February, Seller Snap raised prices on her best-selling serum from $24.99 to $29.99 to capture Valentine's Day demand. Good move in isolation. But Perpetua was still running the same bid strategy calibrated to $24.99 margins. At the higher price point, her conversion rate dropped 22%. Perpetua responded by increasing bids to compensate for the lower conversion, which raised her ACoS from 19% to 34%.
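The ACoS jump can be decomposed with a little arithmetic. ACoS is ad spend divided by ad sales, so with clicks cancelling out it equals CPC / (conversion rate × price). The sketch below assumes each ad-attributed order is one unit at list price and reads "dropped 22%" as a relative drop in conversion rate; both are assumptions, not figures from Sarah's account.

```python
def acos(cpc: float, cvr: float, price: float) -> float:
    """ACoS = ad spend / ad sales = (clicks * CPC) / (clicks * CVR * price) = CPC / (CVR * price).
    Assumes each ad-attributed order is one unit at list price."""
    return cpc / (cvr * price)

def implied_cpc_multiplier(acos_before: float, acos_after: float,
                           price_before: float, price_after: float,
                           cvr_ratio: float) -> float:
    """CPC(after) / CPC(before) needed to explain the ACoS move.
    From acos(): acos_after / acos_before = cpc_ratio / (cvr_ratio * price_ratio)."""
    price_ratio = price_after / price_before
    return (acos_after / acos_before) * cvr_ratio * price_ratio

# Sarah's serum: $24.99 -> $29.99, conversion down 22% (relative), ACoS 19% -> 34%.
CVR_RATIO = 0.78
# Holding CPC fixed, ACoS scales by 1 / (cvr_ratio * price_ratio).
acos_if_cpc_unchanged = 0.19 / (CVR_RATIO * (29.99 / 24.99))
cpc_multiplier = implied_cpc_multiplier(0.19, 0.34, 24.99, 29.99, CVR_RATIO)
```

Under those assumptions, the price increase and conversion drop alone would have moved ACoS only to about 20%. Reaching 34% implies average CPC rose to roughly 1.68 times its earlier level, consistent with the bid increases the PPC tool made in isolation.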

Meanwhile, SoStocked had flagged that same serum as "overstocked" and recommended she run a promotion to move units. Nobody connected the dots: her tools were raising the price on an overstocked product while escalating ad spend on it.

By the time Sarah manually reconciled these signals in March, she had burned $7,200 in ad spend that never needed to happen and sat on excess inventory that a coordinated system would have priced and promoted correctly from day one.

This is not a rare edge case. It is the default outcome when smart tools operate without shared context.

The math on coordination waste:

| Signal missed | Typical cost per month |
|---|---|
| PPC running on stocked-out ASINs | $1,500-$4,000 |
| Bids not adjusting after price change | $2,000-$5,000 |
| Over-ordering due to ad-inflated velocity | $3,000-$8,000 |
| Under-pricing while running expensive ads | $1,000-$3,000 |

For sellers doing $100K+/month, the coordination tax is typically $5K-$15K/month in preventable waste.

The coordination is not magic. It is architecture.

Each AI employee at Profasee has three things standalone tools lack:

1. Shared context. Every employee sees the same real-time data: your COGS, your inventory levels, your ad spend, your pricing, your listing quality scores. When Oracle changes a price, Marko already knows. When Bruno flags a stockout risk, everyone sees it.

2. Event-driven reactions. Employees do not poll for changes on a schedule. They react to events. Price changed? Marko recalculates bids within minutes. Inventory dropped below safety stock? Marko reduces spend. Listing audit found a problem? Marko pauses spend on that ASIN until it is fixed.

3. Claudia, the coordinator. Claudia is the COO. She does not manage PPC or pricing directly. She ensures the employees are not contradicting each other. She produces your morning brief. She escalates decisions that exceed anyone's guardrails. She is the reason you can run a 5-minute morning instead of a 3-hour one.
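The three pieces above can be sketched as a shared state object, a publish/subscribe event bus, and a coordinator check. This is a minimal in-process illustration, not Profasee's architecture: every class, handler, and field name here is hypothetical, `ASIN-1` is a placeholder, and a production system would more likely use a durable message queue than direct function calls.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class SharedContext:
    # Hypothetical shared state; a real system would also hold COGS, ad spend, listing scores.
    prices: dict[str, float] = field(default_factory=dict)
    inventory: dict[str, int] = field(default_factory=dict)
    safety_stock: dict[str, int] = field(default_factory=dict)
    ad_status: dict[str, str] = field(default_factory=dict)  # "scaling", "reduced", "paused"
    brief: list[str] = field(default_factory=list)           # lines for the morning brief

Handler = Callable[[SharedContext, dict], None]

class EventBus:
    """Minimal in-process publish/subscribe: subscribers react as soon as an event is published."""

    def __init__(self, ctx: SharedContext) -> None:
        self.ctx = ctx
        self._handlers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> None:
        for handler in self._handlers[event_type]:
            handler(self.ctx, payload)

# Publishers write to the shared context first, then announce the change.
def oracle_change_price(bus: EventBus, asin: str, new_price: float) -> None:
    bus.ctx.prices[asin] = new_price
    bus.publish("price_changed", {"asin": asin})

def bruno_update_inventory(bus: EventBus, asin: str, units: int) -> None:
    bus.ctx.inventory[asin] = units
    bus.publish("inventory_changed", {"asin": asin})

# Marko-style reactions (hypothetical handlers).
def marko_on_price(ctx: SharedContext, event: dict) -> None:
    asin = event["asin"]
    ctx.brief.append(f"Marko: recalculating bids for {asin} at ${ctx.prices[asin]:.2f}")

def marko_on_inventory(ctx: SharedContext, event: dict) -> None:
    asin = event["asin"]
    if ctx.inventory[asin] < ctx.safety_stock.get(asin, 0):
        ctx.ad_status[asin] = "reduced"
        ctx.brief.append(f"Marko: reduced spend on {asin}, below safety stock")

def marko_on_listing_issue(ctx: SharedContext, event: dict) -> None:
    ctx.ad_status[event["asin"]] = "paused"
    ctx.brief.append(f"Marko: paused spend on {event['asin']} until the listing is fixed")

def claudia_find_conflicts(ctx: SharedContext) -> list[str]:
    """Coordinator check: ASINs still scaling ads while below safety stock.
    Unknown inventory counts as zero, the cautious reading."""
    return [asin for asin, status in ctx.ad_status.items()
            if status == "scaling"
            and ctx.inventory.get(asin, 0) < ctx.safety_stock.get(asin, 0)]

ctx = SharedContext(safety_stock={"ASIN-1": 200}, ad_status={"ASIN-1": "scaling"})
bus = EventBus(ctx)
bus.subscribe("price_changed", marko_on_price)
bus.subscribe("inventory_changed", marko_on_inventory)
bus.subscribe("listing_issue", marko_on_listing_issue)

oracle_change_price(bus, "ASIN-1", 29.99)
bruno_update_inventory(bus, "ASIN-1", 150)
```

Because the price and inventory writes land in the shared context before the event fires, Marko never acts on stale numbers, and Claudia's check finds nothing left to reconcile.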

This is not theoretical. It is how every healthy organization works. Each person owns their domain but they share a meeting room, a Slack channel, and a set of company priorities.

The biggest objection sellers have to AI automation is control. "What if it makes a mistake on my account?"

This is a reasonable concern. It is also why AI employees should never start with full autonomy.

Profasee uses a progressive autonomy model called the Trust Ladder:

Level 1 - Observe. The employee watches your account and tells you what it would do. No actions taken. You see the recommendations, the reasoning, and the expected outcome. This is read-only.

Level 2 - Recommend. The employee proposes specific actions with one-click approval. You review, approve or reject, and the employee learns from your decisions.

Level 3 - Act with guardrails. The employee can execute actions within boundaries you set. Bid changes up to 15%. Price adjustments within $2 of current price. Budget shifts under $50/day. Anything outside guardrails gets escalated.

Level 4 - Act with review. Full autonomy within guardrails. Actions happen automatically but you get a daily summary. One-click undo on anything from the last 72 hours.

Level 5 - Full trust. The employee operates like a seasoned team member. Guardrails still exist. Audit trail still logs everything. But you are reviewing weekly, not daily.

Most sellers reach Level 3 within two weeks. The key insight: the failure state is always "the employee does nothing." It never takes an action it cannot explain or undo.
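The guardrail logic behind the Trust Ladder can be sketched as a single decision function. This is a hypothetical illustration using the example limits from Level 3 (15% bid change, $2 price move, $50/day budget shift); the action kinds and return values are invented names. Every branch except `execute` leaves the account untouched, which is what makes "does nothing" the failure state.

```python
from dataclasses import dataclass
from enum import IntEnum

class TrustLevel(IntEnum):
    OBSERVE = 1
    RECOMMEND = 2
    ACT_WITH_GUARDRAILS = 3
    ACT_WITH_REVIEW = 4
    FULL_TRUST = 5

@dataclass(frozen=True)
class Guardrails:
    # Example limits from the Level 3 description; each seller sets their own.
    max_bid_change_pct: float = 0.15        # bid changes up to 15%
    max_price_change: float = 2.00          # within $2 of current price
    max_budget_shift_per_day: float = 50.0  # budget shifts under $50/day

@dataclass(frozen=True)
class ProposedAction:
    kind: str        # "bid", "price", or "budget" (hypothetical action types)
    current: float
    proposed: float

def within_guardrails(action: ProposedAction, g: Guardrails) -> bool:
    delta = abs(action.proposed - action.current)
    if action.kind == "bid":
        return action.current > 0 and delta / action.current <= g.max_bid_change_pct
    if action.kind == "price":
        return delta <= g.max_price_change
    if action.kind == "budget":
        return delta < g.max_budget_shift_per_day
    return False  # unknown action types never pass

def decide(action: ProposedAction, level: TrustLevel, g: Guardrails) -> str:
    """Every path except 'execute' leaves the account untouched."""
    if level == TrustLevel.OBSERVE:
        return "log_only"        # show what it would do, take no action
    if level == TrustLevel.RECOMMEND:
        return "await_approval"  # one-click approval by the seller
    if within_guardrails(action, g):
        return "execute"         # Levels 3-5 share the same limits; review cadence differs
    return "escalate"            # outside limits: do nothing and ask a human
```

In this sketch, moving up the ladder changes who reviews and how often, not what the limits are. A $5 price jump escalates even at Level 5.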

Amazon updated its Business Solutions Agreement on March 4, 2026, with the first formal policy governing AI agents on the platform.

This matters for every seller using automation. Here is what you need to know:

SP-API only. All automated seller actions must flow through registered SP-API applications. Browser automation and Seller Central scraping are prohibited.

Three-tier action system. Routine operations (inventory sync, basic repricing) have minimal restrictions. Moderate actions (catalog updates, bid adjustments) require rate limiting and audit logging. High-impact actions (bulk listings, price changes over 20% in 24 hours) require documented human authorization.

Audit trail required. Every automated action needs a retrievable log with timestamp, action type, input data, and output. Minimum 12-month retention.

Self-identification. AI agents must clearly identify themselves as automated systems. Amazon retains the right to revoke access at any point.
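One way to operationalize the three-tier system is a classifier that runs before any action. This is a hypothetical sketch, not Amazon's specification: the action names are invented, and "price changes over 20% in 24 hours" is read here as comparing the new price to the price in effect 24 hours earlier. Unknown actions and reprices without enough history default to the most restrictive tier.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

ROUTINE, MODERATE, HIGH_IMPACT = "routine", "moderate", "high_impact"

# Hypothetical action names mapped to the tiers as summarized above.
BASE_TIER = {
    "inventory_sync": ROUTINE,
    "reprice": ROUTINE,
    "catalog_update": MODERATE,
    "bid_adjustment": MODERATE,
    "bulk_listing": HIGH_IMPACT,
}

PriceHistory = List[Tuple[datetime, float]]  # (timestamp, price), sorted oldest first

def price_24h_ago(history: PriceHistory, now: datetime) -> float:
    """Price in effect at now - 24h; falls back to the oldest known price."""
    cutoff = now - timedelta(hours=24)
    ref = history[0][1]
    for ts, price in history:
        if ts > cutoff:
            break
        ref = price
    return ref

def classify(action: str, now: Optional[datetime] = None,
             new_price: Optional[float] = None,
             history: Optional[PriceHistory] = None) -> str:
    tier = BASE_TIER.get(action, HIGH_IMPACT)  # unknown actions: most restrictive tier
    if action == "reprice":
        if not history or now is None or new_price is None:
            return HIGH_IMPACT  # cannot show the move is under 20%, so require a human
        ref = price_24h_ago(history, now)
        if abs(new_price - ref) / ref > 0.20:
            return HIGH_IMPACT
    return tier

def needs_human_authorization(tier: str) -> bool:
    return tier == HIGH_IMPACT
```

Under this reading, a jump like the serum's move from $24.99 to $29.99 (just over 20%) would land in the high-impact tier and wait for documented human sign-off.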

The Amazon v. Perplexity case reinforced this: a federal court blocked Perplexity's Comet shopping agent from accessing Amazon accounts, ruling that user permission alone does not equal platform authorization.

Tools that operate through official APIs are compliant. Tools that scrape, automate browsers, or bypass API rate limits are not.

Profasee's AI employees operate exclusively through SP-API and Amazon Ads API. Every action has a timestamped audit trail. Human-in-the-loop controls are built into the trust ladder. This is not an afterthought. It is the architecture.
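A minimal sketch of an audit record that covers the required fields (timestamp, action type, input data, output) plus a field naming the automated agent. It also supports the 72-hour undo window from the Trust Ladder. The class names and the in-memory list are hypothetical; a real system would persist entries durably. Twelve months is approximated as 366 days so pruning never drops anything less than a year old.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timedelta, timezone

# 12 months approximated as 366 days so pruning never drops anything under a year old.
RETENTION = timedelta(days=366)
# One-click undo window from Trust Ladder Level 4.
UNDO_WINDOW = timedelta(hours=72)

@dataclass(frozen=True)
class AuditEntry:
    timestamp: datetime   # timezone-aware UTC
    action_type: str
    input_data: dict      # data the decision was based on
    output: dict          # what was changed
    agent: str            # names the automated system that acted

    def to_json(self) -> str:
        record = asdict(self)
        record["timestamp"] = self.timestamp.isoformat()
        return json.dumps(record, sort_keys=True)

class AuditLog:
    """Entries are only appended or aged out after the retention period."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, entry: AuditEntry) -> None:
        self._entries.append(entry)

    def undoable(self, now: datetime) -> list[AuditEntry]:
        return [e for e in self._entries if now - e.timestamp <= UNDO_WINDOW]

    def prune(self, now: datetime) -> None:
        self._entries = [e for e in self._entries if now - e.timestamp <= RETENTION]

    def __len__(self) -> int:
        return len(self._entries)
```

Keeping `input_data` alongside `output` is what makes an action explainable after the fact, not just reversible.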

Standalone tools are better for: Single-function depth. If you only need PPC management and nothing else, a dedicated PPC tool might go deeper on that one function.

AI employees are better for: Operational coordination. If you are running PPC, pricing, and inventory simultaneously (which every serious seller is), coordination prevents the waste that isolated optimization creates.

The real comparison is not feature-for-feature. It is whether you want to be the integration layer between your tools.

Agencies provide human judgment, which matters. But they also provide inconsistency, slow response times, and unaccountable decisions.

An AI employee makes the same quality decision at 3am on a Saturday as it does at 10am on a Tuesday. An agency account manager does not.

Many sellers run AI employees alongside their agency in observe mode first. Within a week, they can compare what the AI would have done versus what the agency actually did.

If you are doing $30K/month and managing 10 SKUs, manual management is fine. The coordination problem does not bite until you have enough volume for miscoordination to cost real money.

At $100K+/month with 50+ SKUs, doing it yourself costs you 15-20 hours per week in Seller Central. That time has a value, and it is higher than the cost of AI employees.
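A quick break-even check makes the trade-off explicit. The sketch uses the $1,595/month full-team price quoted in the FAQ and the 15-20 hours per week from the paragraph above, and it assumes all of those hours are actually recovered, which is an assumption rather than a measured result.

```python
WEEKS_PER_MONTH = 52 / 12  # about 4.33

def break_even_hourly_value(monthly_cost: float, hours_saved_per_week: float) -> float:
    """Hourly value of your time above which the saved hours are worth more than the monthly cost."""
    hours_per_month = hours_saved_per_week * WEEKS_PER_MONTH
    return monthly_cost / hours_per_month

low_hours = break_even_hourly_value(1595, 15)
high_hours = break_even_hourly_value(1595, 20)
```

That works out to roughly $18 to $25 per hour. If your time is worth more than that, and for most owners of a $100K+/month business it is, the hours alone cover the cost.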

Before switching to any AI employee platform, ask these five questions:

1. Does it share data across functions? If the PPC agent cannot see your inventory levels and pricing, it is a tool, not an employee.

2. What happens when it is wrong? Look for observe mode, guardrails, one-click undo, and a maximum failure state of "does nothing." If the worst case is "takes an action you cannot reverse," walk away.

3. How does it handle Amazon's Agent Policy? Ask for SP-API registration confirmation, audit trail capabilities, and human-in-the-loop controls. Any tool that cannot answer these questions specifically is a compliance risk.

4. Can you start with one function? Modular pricing (hire one employee, add more when value is proven) reduces risk. Platforms that require all-or-nothing commitments create unnecessary lock-in.

5. What does the morning look like? If you still need to log into Seller Central, check five dashboards, and reconcile conflicting data, the "AI employee" is just a tool with a new name. The real test is whether your morning review drops to 5 minutes.

The era of stacking five AI tools that do not talk to each other is ending. Not because the tools are bad. They are better than ever. But because the coordination gap between them is where the money leaks.

AI employees do not replace your tools by being better at any single function. They replace the work you do connecting those functions together. The 3am price change that nobody coordinated with PPC. The ad spend on a product about to stock out. The listing update that nobody told the pricing engine about.

That coordination work is invisible, unpaid, and worth more than most sellers realize.

Every day you operate as the human middleware between disconnected AI tools is a day you are doing work that a coordinated system should handle while you sleep.

What is the difference between an AI tool and an AI employee? An AI tool automates one function (PPC, repricing, or listings) and operates in isolation. An AI employee owns an operational domain and coordinates with other employees, sharing data across pricing, PPC, inventory, and listings to make decisions that account for your full business context.

Are AI employees safe for my Amazon account? Yes, when they operate through Amazon's official SP-API and Ads API and comply with the March 2026 Agent Policy. Look for observe mode, progressive autonomy, hard guardrails, and one-click undo. The failure state should always be "the employee does nothing," never uncontrolled action.

How much do AI employees for Amazon cost? Individual AI employees at Profasee range from $249/month (catalog auditor) to $399/month (PPC manager). A full team with coordinator is $1,595/month. Compare to standalone tool stacks at $1,500-$2,000/month or agencies at $8K-$15K/month.

Can I run AI employees alongside my current tools or agency? Yes. Start in observe mode to compare AI employee recommendations against your current tools or agency decisions. Most sellers run a 2-4 week parallel comparison before making any switch. There is no risk during observe mode because no actions are taken.

How long does it take to see results from AI employees? Most sellers see measurable coordination benefits within the first 30 days. The 5-minute morning (daily brief replacing hours in Seller Central) happens within the first week. Profit impact from eliminated coordination waste typically shows within 60 days.