Chad Rubin

April 21, 2026 · Updated May 11, 2026 · 11 min read


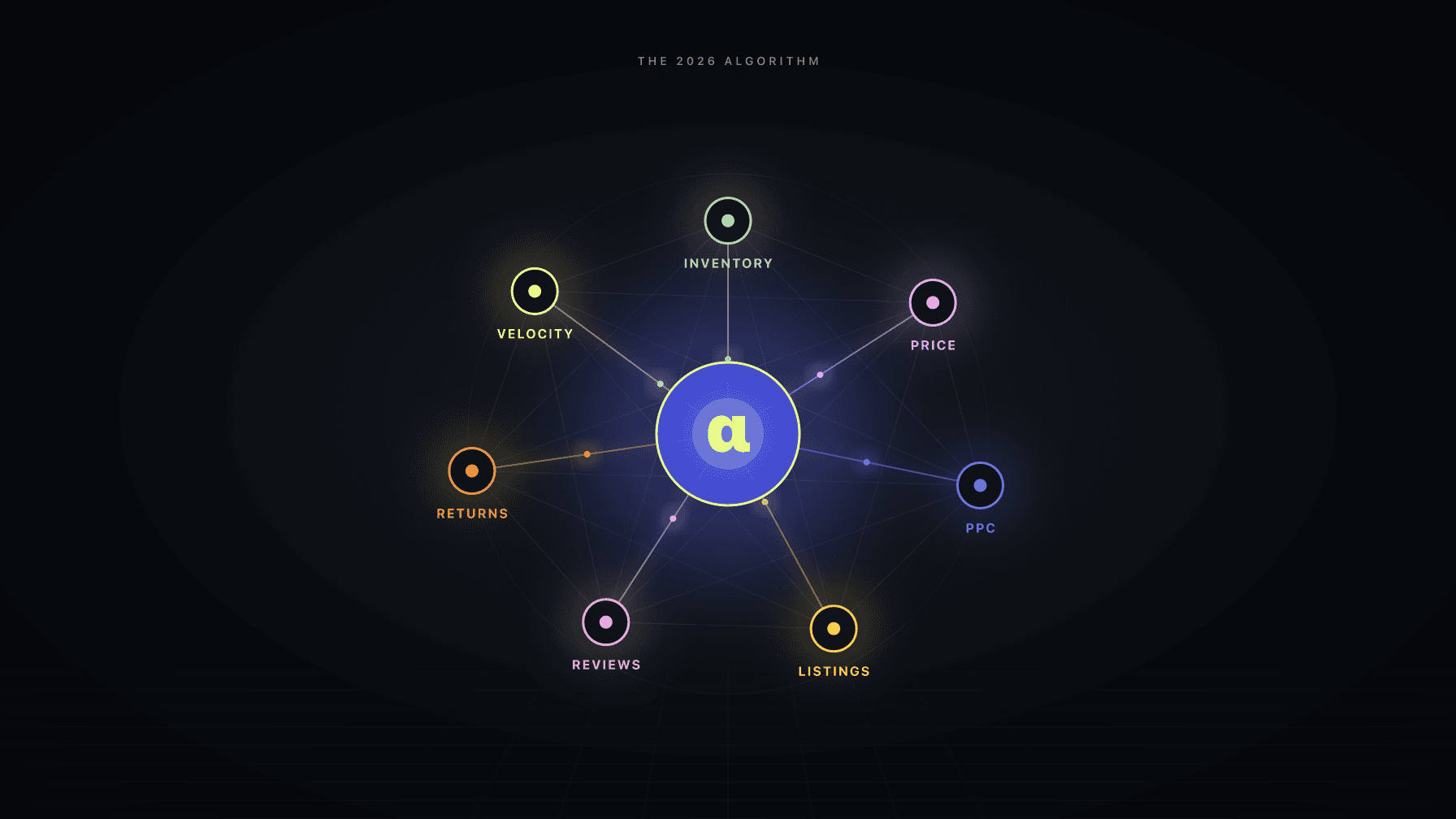

Amazon's search algorithm in 2026 ranks products based on cross-functional signals: inventory stability, conversion rate, return rates, session engagement, pricing competitiveness, and ad performance. These signals span pricing, PPC, inventory, and listings. No single-function tool can optimize across all of them. That is why siloed tool stacks are losing ground to coordinated systems.

The algorithm you optimized for in 2023 is not the algorithm running today. Amazon's deployment of COSMO (its common sense knowledge engine) and Rufus (its AI shopping assistant, with 300 million+ users) fundamentally changed how products get discovered and ranked. Keywords still matter. But the algorithm now understands intent, evaluates business health, and penalizes operational dysfunction.

If your inventory goes in and out of stock, the algorithm notices. If your pricing is inconsistent with your ad conversion rates, the algorithm notices. If your return rate spikes because your listing overpromises, the algorithm notices. These are not keyword signals. They are operational signals. And optimizing them requires tools that share data across functions.

This is the shift most Amazon sellers have not yet internalized: the algorithm stopped rewarding individual metric optimization and started rewarding operational coherence. In one sentence, the Amazon algorithm changes in 2026 are a move from keyword-match ranking to business-health ranking, which is why the tools that worked last year do not work this year.


The Amazon algorithm changes in 2026 did not arrive in a single release. They arrived as the accumulated weight of three distinct eras of ranking logic, each one adding signals the previous tool stack could not see.


Ran a 7-figure Amazon brand for a decade. Founded Skubana (acquired). Co-founded Prosper Show. 15+ years on Amazon.


Amazon's original A9 algorithm was relatively simple. The products that sold the most units ranked highest. Keywords in your title and backend drove discoverability. Sales velocity was king. If you could drive enough sales (even at a loss), you could rank and then scale back spending.

This rewarded aggressive PPC spend, promotional pricing, and launch tactics. It did not particularly reward operational excellence. A seller with inconsistent inventory, volatile pricing, and mediocre conversion rates could still rank well if they drove enough volume.

In the second era, Amazon began incorporating more signals. Seller authority, external traffic, conversion rate, and click-through rate all gained weight. The algorithm became harder to game with pure velocity.

But the tools did not change. PPC tools still optimized PPC. Repricers still optimized price. Inventory tools still tracked inventory. Each tool optimized its own signal in isolation.

The third era is where we are now. Two major deployments changed the game:

COSMO (Common Sense Knowledge Engine): Amazon's knowledge graph that understands product relationships, attributes, and context beyond keywords. COSMO knows that "waterproof hiking boots for wide feet" is a specific query that requires matching on multiple attributes, not just keyword overlap. It evaluates whether your product actually delivers what the search implies.

Rufus AI Shopping Assistant: Rufus does not search. Rufus shops. With 300 million+ users, Rufus is now a significant discovery channel. When a customer asks Rufus "what is the best protein powder for weight loss?", Rufus evaluates products across reviews, features, price, seller reliability, and conversion data. Keyword stuffing does not impress an LLM that reads your reviews and compares your claims to your return rate.

Together, COSMO and Rufus create an algorithm that rewards coherence across every dimension of your seller operations. Here is what it weighs now:

Inventory stability

What the algorithm measures: Consistency of availability. Frequency, duration, and timing of stockouts. Time-to-restock after out-of-stock events.

How it affects ranking: Frequent stockouts trigger ranking suppression that persists for weeks after restocking (roughly 3-6 weeks in Profasee seller data, depending on how long the stockout lasted). The algorithm treats inventory instability as a proxy for seller unreliability, which is why Amazon's own Inventory Performance Index (IPI) now factors into storage limits and ranking visibility. A product that goes in and out of stock gets demoted relative to competitors with consistent availability.

Why siloed tools fail: Your inventory tool can tell you when you will stock out. But it cannot prevent the stockout by adjusting pricing (to slow velocity) or reducing PPC (to lower demand). By the time a standalone inventory tool alerts you, the damage is in motion.

Coordinated solution: Bruno detects stockout risk 3-4 weeks out. Oracle raises price to slow velocity. Marko reduces ad spend. The stockout is prevented without human intervention.
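The hand-off above can be sketched in a few lines. This is a minimal illustration, not Profasee's implementation: `SkuState`, `days_of_cover`, and `stockout_plan` are hypothetical names, the 28-day horizon stands in for "3-4 weeks out", and the only output is the fraction by which sales velocity would need to slow for stock to last until the restock lands. How that slowdown is split between a price increase and an ad-spend cut is left open.

```python
from dataclasses import dataclass


@dataclass
class SkuState:
    units_on_hand: int
    daily_velocity: float    # average units sold per day
    days_until_restock: int  # days until the next shipment is sellable


def days_of_cover(sku: SkuState) -> float:
    """Days the current stock lasts at the current sales velocity."""
    if sku.daily_velocity <= 0:
        return float("inf")
    return sku.units_on_hand / sku.daily_velocity


def stockout_plan(sku: SkuState, horizon_days: int = 28) -> dict:
    """Flag a stockout inside the horizon and size the slowdown needed.

    The 28-day default is an assumption standing in for "3-4 weeks out".
    """
    cover = days_of_cover(sku)
    at_risk = cover < sku.days_until_restock and cover <= horizon_days
    if not at_risk:
        return {"at_risk": False, "velocity_cut": 0.0}
    # Velocity at which stock runs out exactly when the restock arrives.
    safe_velocity = sku.units_on_hand / sku.days_until_restock
    cut = 1 - safe_velocity / sku.daily_velocity
    return {"at_risk": True, "velocity_cut": round(cut, 4)}


plan = stockout_plan(SkuState(units_on_hand=600, daily_velocity=30, days_until_restock=25))
print(plan)
```

In this made-up case, 600 units at 30 units per day last 20 days against a 25-day restock, so velocity has to fall by about 20% to bridge the gap.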

Conversion rate

What the algorithm measures: What percentage of visitors purchase. Broken into organic conversion and ad conversion, both weighted into overall ranking.

How it affects ranking: Higher conversion rate signals product-market fit. The algorithm rewards products that convert well and suppresses products that generate traffic without converting.

Critical 2026 change: Ad conversion rates now feed directly back into organic ranking. Products that convert well on ads get an organic lift. This means your PPC performance is not isolated. It shapes your organic position.

Why siloed tools fail: Conversion rate is driven by multiple factors: price, listing quality, review score, competitive positioning, and ad targeting. A PPC tool optimizing for clicks does not know that a price change just made those clicks less likely to convert. A repricer optimizing price does not know that the lower conversion will suppress organic ranking through the ad conversion feedback loop.

Coordinated solution: When Oracle adjusts price, Marko recalibrates bids to the new conversion expectations. When Brett (listing auditor) flags a listing quality issue affecting conversion, Marko reduces spend until it is fixed. The conversion signal stays healthy because all inputs are coordinated.
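To make the price-to-bid link concrete, here is a small worked sketch. It assumes the standard definition ACoS = ad spend / ad sales, so the most you can pay per click at a given ACoS is price × conversion rate × ACoS, and the break-even ACoS equals the pre-ad margin. The function names and the `unit_cost` figure (landed cost plus fees) are illustrative and do not come from any Profasee or Amazon API.

```python
def breakeven_acos(price: float, unit_cost: float) -> float:
    """ACoS at which ad spend consumes the whole pre-ad margin."""
    return (price - unit_cost) / price


def max_cpc(price: float, conversion_rate: float, target_acos: float) -> float:
    """Highest cost per click that still meets the target ACoS.

    Follows from ACoS = CPC / (price * conversion_rate).
    """
    return price * conversion_rate * target_acos


unit_cost = 24.0        # hypothetical landed cost plus fees
conversion_rate = 0.10  # held constant here to isolate the margin effect

for price in (40.0, 36.0):  # before and after a 10% price cut
    ceiling = breakeven_acos(price, unit_cost)
    bid = max_cpc(price, conversion_rate, ceiling)
    print(f"price={price:.2f}  break-even ACoS={ceiling:.1%}  max CPC={bid:.2f}")
```

With these assumed numbers, a 10% price cut lowers the break-even bid from about $1.60 to about $1.20 per click, a 25% drop. In practice conversion rate also moves with price, which is exactly the input a bid tool cannot see if it is not told about the price change.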

Return rate

What the algorithm measures: Post-purchase return frequency, return reasons, and return rate relative to category average.

How it affects ranking: High return rates suppress ranking. Amazon expanded return processing fees to nearly every category in 2026. The algorithm now treats returns as a strong negative signal.

Why siloed tools fail: Return rate is a product and listing quality signal, not something PPC or pricing tools control. But pricing affects returns indirectly: products priced significantly above perceived value generate more returns from buyers who feel they overpaid.

Coordinated solution: Brett monitors listing quality and flags mismatches between listing claims and product reality (a common return driver). Oracle factors return rate data into pricing decisions. If a product has a 12% return rate (above category average), aggressive pricing may attract price-sensitive buyers who are more likely to return.

Session engagement

What the algorithm measures: Time on page, scroll depth, A+ Content interaction, video views, question engagement.

How it affects ranking: Products with higher session engagement rank better because engagement signals genuine buyer interest rather than accidental clicks.

Why siloed tools fail: Session engagement is driven by listing content quality. Most PPC tools do not evaluate listing quality as an input to bid decisions. They drive traffic to listings regardless of whether the listing is optimized for engagement.

Coordinated solution: Brett audits listing quality. When engagement metrics are weak, Marko adjusts spend (no point driving expensive traffic to a poorly optimized listing). Claudia flags the engagement issue in the morning brief with specific recommendations.

Pricing competitiveness

What the algorithm measures: Your price relative to similar products in the category. Historical price consistency. Sudden large price changes.

How it affects ranking: Amazon's algorithm promotes products that offer competitive value. Extreme pricing (too high or too low relative to category norms) raises flags. The March 2026 Agent Policy caps automated price changes at 20% per day, and the algorithm likely considers price stability as a ranking signal.

Why siloed tools fail: Repricers optimize price without considering how the new price affects ad conversion, which affects organic ranking, which affects total demand. A "competitive" price set by a repricer might look good in isolation but create a negative feedback loop through the ad conversion channel.

Coordinated solution: Oracle prices for profit, not just competitiveness. Marko adjusts bids to match the new margin. The pricing decision accounts for the downstream ranking effects, not just the immediate competitive position.

Ad performance

What the algorithm measures: CTR on ads, ad conversion rate, ACoS trends, ad quality score. Amazon's own advertising strategy guidance has shifted to treat these metrics as organic-ranking inputs, not just ad-auction inputs.

How it affects ranking: High-performing ads signal a product that Amazon should promote organically. Low-performing ads signal a product that Amazon should not waste organic real estate on.

Why siloed tools fail: Ad performance is a function of bid strategy, targeting, listing quality, and pricing. A PPC tool can optimize bids and targeting. It cannot fix a listing or adjust a price.

Seller authority

What the algorithm measures: Account age, order defect rate, late shipment rate, cancellation rate, customer service metrics.

How it affects ranking: Trusted sellers get ranking preference. This is Amazon's way of reducing risk in product recommendations, especially through Rufus.

Rufus is not a search bar with a chatbot wrapper. It is a shopping assistant that reasons about products.

When a customer asks Rufus "what is the best ergonomic office chair under $300?", Rufus:

This is fundamentally different from keyword search. You cannot SEO your way into a Rufus recommendation by stuffing "ergonomic office chair" into your title. Rufus reads your reviews, evaluates your claim against buyer feedback, and considers your operational track record.

The sellers who show up in Rufus recommendations are sellers with:

Every one of these signals is cross-functional. Optimizing for Rufus requires optimizing across PPC, pricing, inventory, and listings simultaneously.

Here is what happens when you optimize each signal in isolation:

Your PPC tool drives aggressive traffic to maximize ad sales velocity. It succeeds. ACoS looks good.

But the aggressive traffic at the current price produces a 6% conversion rate (below category average of 9%). The low conversion rate suppresses organic ranking.

Your repricer drops the price by 12% to improve conversion. It works. Conversion jumps to 10%.

But the PPC tool does not know the price dropped. Bids are still calibrated to the old margin. ACoS spikes. The PPC tool responds by cutting bids, which reduces traffic, which slows velocity, which further hurts organic ranking.

Your inventory tool sees velocity declining and pushes back the reorder date. Two weeks later, the PPC tool finds its footing at the new price and scales spend back up. Velocity accelerates. The inventory tool's forecast was based on the low-velocity period. Now you are heading toward a stockout.

Each tool made a locally optimal decision. Together, they created a mess. The algorithm sees all of it: inconsistent conversion, volatile pricing, unstable velocity, inventory risk. Ranking drops.

This is not hypothetical. This is the default outcome when smart tools operate without shared context.

Same scenario, but with coordinated AI employees:

Oracle adjusts price based on demand elasticity, margin targets, inventory level, and competitive data. Price drops 8% (not 12%, because Oracle knows a smaller reduction is sufficient given the current ad spend level).

Marko immediately recalibrates bids to reflect the lower margin. Bids tighten by 6% on mid-funnel keywords, hold steady on high-converting exact match keywords. Total ad spend stays within 3% of the previous level.

Bruno updates the velocity forecast to reflect the expected conversion improvement from the price drop. Reorder timing adjusts automatically.

Claudia logs everything in the morning brief: "Oracle reduced price on ASIN X by 8%. Marko adjusted bids on 18 keywords to maintain 28% target ACoS at the new margin. Bruno projects 12% velocity increase and moved reorder date forward by 6 days. No action required."

The algorithm sees: Stable pricing adjustment (not volatile). Maintained ad conversion (not tanked). Consistent availability (no stockout risk). Coherent signals across all dimensions.

Result: Organic ranking holds or improves. Total profit optimizes rather than oscillating.
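The reorder shift in Claudia's brief is simple arithmetic, sketched here under stated assumptions: a fixed reorder point, constant daily velocity, and hypothetical inventory figures chosen so the reorder was 56 days out before the change. None of these names or inventory numbers come from Profasee; only the 12% velocity increase and the 6-day shift are taken from the brief.

```python
def days_until_reorder(units_on_hand: float, reorder_point: float, daily_velocity: float) -> float:
    """Days until stock falls to the reorder point at a constant velocity."""
    if daily_velocity <= 0:
        return float("inf")
    return max(units_on_hand - reorder_point, 0) / daily_velocity


units_on_hand = 1620  # hypothetical
reorder_point = 500   # hypothetical
velocity = 20.0       # hypothetical units per day before the price drop

before = days_until_reorder(units_on_hand, reorder_point, velocity)
after = days_until_reorder(units_on_hand, reorder_point, velocity * 1.12)  # +12% velocity
shift_days = round(before - after)
print(f"reorder in {before:.0f} days -> {after:.0f} days (moved forward {shift_days} days)")
```

A forecast that is never told about the price change keeps the original 56-day date, which is how the siloed stack drifts toward a stockout.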

Profasee's AI employees were designed for this algorithm. Each employee optimizes one function, but they all share data and react to each other's decisions. The result is the operational coherence that the 2026 algorithm rewards.

Oracle prices for profit. Marko bids based on current margin. Bruno forecasts based on actual PPC and pricing plans. Brett audits listings for the quality signals Rufus evaluates. Claudia coordinates everything in one morning brief.

The algorithm does not care which tool you use. It cares whether your operations are coherent. Coordinated AI employees make them coherent by default. If there is one thing to take from this breakdown of the Amazon algorithm changes in 2026, it is this: the sellers who win from here are the ones whose tools stop acting like strangers to each other.

Amazon has never officially named or detailed its ranking algorithm. "A9" and "A10" are industry terms used to describe observed changes. What we know comes from reverse-engineering ranking factors through testing and data analysis. Amazon has confirmed that factors like inventory availability, pricing competitiveness, and ad performance influence ranking, but exact weightings are not disclosed.

Rufus has over 300 million users and drove approximately $12 billion in incremental sales in 2025. Rufus-engaged shoppers have 60% higher purchase completion rates. It is a significant and growing discovery channel. Optimizing for Rufus is not optional for serious sellers.

The March 2026 Agent Policy caps automated price changes at 20% within 24 hours. Within that limit, moderate price fluctuations (5-15%) are normal and expected. The algorithm likely views extreme price volatility negatively, but regular dynamic pricing within reasonable bounds is not penalized.
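A guardrail for the cap can be sketched as a clamp. This assumes the 20% limit is measured against the price in effect 24 hours earlier, which is an interpretation rather than quoted policy text; `clamp_price` and its parameters are hypothetical.

```python
def clamp_price(reference_price: float, proposed_price: float, max_change: float = 0.20) -> float:
    """Limit a proposed price to within +/- max_change of the reference price.

    reference_price: price in effect 24 hours ago (assumed basis for the cap).
    """
    if reference_price <= 0:
        raise ValueError("reference_price must be positive")
    lower = reference_price * (1 - max_change)
    upper = reference_price * (1 + max_change)
    return min(max(proposed_price, lower), upper)


print(clamp_price(50.00, 46.00))  # 8% cut: inside the cap, passes through
print(clamp_price(50.00, 35.00))  # 30% cut: held at the 20% floor
print(clamp_price(50.00, 65.00))  # 30% increase: held at the 20% ceiling
```

A repricer that wants a larger move would have to spread it across several days, which also keeps the price history smoother.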

Based on data from Profasee sellers: a 1-week stockout typically requires 3-4 weeks of recovery. A 2-week stockout requires 5-6 weeks. Recovery includes both the time for the algorithm to restore ranking and the additional PPC spend needed to rebuild sales velocity. Prevention is always cheaper than recovery.

Manual coordination across pricing, PPC, inventory, and listings is possible at small scale: sellers managing 10-20 SKUs who can dedicate 2-3 hours daily to cross-functional review can do it. Beyond that scale, the volume of decisions and the speed required for real-time coordination exceed what manual processes can handle.